That infinite space “between” what we humans feel as time is where computers spend all their time. It’s an entirely different timescale.

Here’s a great table that illustrates just how enormous these time differentials are. Just translate computer time into arbitrary seconds:

| Event | Latency | Scaled |
| --- | --- | --- |
| 1 CPU cycle | 0.3 ns | 1 s |
| Level 1 cache access | 0.9 ns | 3 s |
| Level 2 cache access | 2.8 ns | 9 s |
| Level 3 cache access | 12.9 ns | 43 s |
| Main memory access | 120 ns | 6 min |
| Solid-state disk I/O | 50-150 μs | 2-6 days |
| Rotational disk I/O | 1-10 ms | 1-12 months |
| Internet: SF to NYC | 40 ms | 4 years |
| Internet: SF to UK | 81 ms | 8 years |
| Internet: SF to Australia | 183 ms | 19 years |
| OS virtualization reboot | 4 s | 423 years |
| SCSI command time-out | 30 s | 3000 years |
| Hardware virtualization reboot | 40 s | 4000 years |
| Physical system reboot | 5 min | 32 millennia |
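The scaling in the table is plain division: every latency is divided by the 0.3 ns cycle time, so one cycle becomes one second. Here is a minimal sketch of that arithmetic in Python. The function names `scaled_seconds` and `humanize` are made up for illustration, and the rounding may differ slightly from the table's.

```python
# Scale factor taken from the table: one 0.3 ns CPU cycle is stretched to 1 s.
CYCLE_NS = 0.3

# Unit sizes in seconds, largest first (a year is assumed to be 365.25 days).
SECONDS_PER_UNIT = [
    ("years", 365.25 * 24 * 3600),
    ("days", 24 * 3600),
    ("hours", 3600),
    ("min", 60),
]


def scaled_seconds(latency_ns):
    """Return the human-scale duration in seconds for a real latency in ns."""
    return latency_ns / CYCLE_NS


def humanize(seconds):
    """Render a duration using the largest unit that fits (hypothetical helper)."""
    for name, size in SECONDS_PER_UNIT:
        if seconds >= size:
            return f"{seconds / size:.1f} {name}"
    return f"{seconds:.1f} s"


if __name__ == "__main__":
    # A few rows from the table, with latencies converted to nanoseconds.
    rows = [
        ("Main memory access", 120),
        ("Internet: SF to NYC", 40e6),
        ("OS virtualization reboot", 4e9),
    ]
    for event, ns in rows:
        print(f"{event}: {humanize(scaled_seconds(ns))}")
```

Feeding in any other row, converted to nanoseconds first, should land close to the table's third column.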

Approximate timing for various operations on a typical PC:

| Operation | Approximate time |
| --- | --- |
| execute typical instruction | 1/1,000,000,000 sec = 1 nanosec |
| fetch from L1 cache memory | 0.5 nanosec |
| branch misprediction | 5 nanosec |
| fetch from L2 cache memory | 7 nanosec |
| Mutex lock/unlock | 25 nanosec |
| fetch from main memory | 100 nanosec |
| send 2K bytes over 1Gbps network | 20,000 nanosec |
| read 1MB sequentially from memory | 250,000 nanosec |
| fetch from new disk location (seek) | 8,000,000 nanosec |
| read 1MB sequentially from disk | 20,000,000 nanosec |
| send packet US to Europe and back | 150 milliseconds = 150,000,000 nanosec |
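To get a feel for the gaps between rows, it can help to express one operation as a multiple of another. The sketch below copies the timings from the table above into a dictionary; the `ratio` helper is a made-up name for illustration.

```python
# Approximate timings in nanoseconds, copied from the table above.
TIMINGS_NS = {
    "execute typical instruction": 1,
    "fetch from L1 cache memory": 0.5,
    "branch misprediction": 5,
    "fetch from L2 cache memory": 7,
    "Mutex lock/unlock": 25,
    "fetch from main memory": 100,
    "send 2K bytes over 1Gbps network": 20_000,
    "read 1MB sequentially from memory": 250_000,
    "fetch from new disk location (seek)": 8_000_000,
    "read 1MB sequentially from disk": 20_000_000,
    "send packet US to Europe and back": 150_000_000,
}


def ratio(slow, fast):
    """How many times longer `slow` takes than `fast` (hypothetical helper)."""
    return TIMINGS_NS[slow] / TIMINGS_NS[fast]


if __name__ == "__main__":
    seek_vs_ram = ratio("fetch from new disk location (seek)", "fetch from main memory")
    print(f"One disk seek costs about {seek_vs_ram:,.0f} main memory fetches")
```

By these figures, a single disk seek costs as much as tens of thousands of main memory fetches.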

Latency is one thing, but it’s also worth considering the cost of that bandwidth.

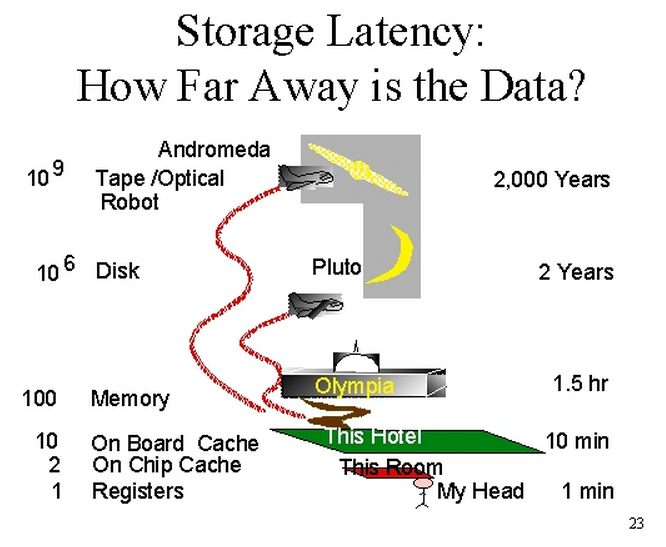

Here’s an interesting way of explaining this: if the CPU registers are how long it takes you to fetch data from your brain, then going to disk is the equivalent of fetching data from Pluto.

- Distance to Pluto: 4.67 billion miles.

- Latest, fastest spinning HDD performance (49.7) versus latest, fastest PCI Express SSD performance (506.8). That’s an improvement of roughly 10x.

- New distance: 467 million miles.

- Distance to Jupiter: 500 million miles.
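The arithmetic in the list above can be checked in a few lines. This sketch uses only the figures quoted there; the variable names are invented, and the two performance numbers are treated as unitless scores because the list gives no units.

```python
# Figures quoted in the list above.
PLUTO_MILES = 4.67e9     # distance to Pluto
JUPITER_MILES = 500e6    # distance to Jupiter
HDD_SCORE = 49.7         # fastest spinning HDD (units not given in the text)
SSD_SCORE = 506.8        # fastest PCI Express SSD (same unspecified units)

# Exact speedup from the two scores; the list rounds this to 10x.
speedup = SSD_SCORE / HDD_SCORE

# Shrink the "trip to disk" by the same factor.
new_distance_miles = PLUTO_MILES / speedup

if __name__ == "__main__":
    print(f"Speedup: {speedup:.1f}x")
    print(f"New distance: {new_distance_miles / 1e6:.0f} million miles")
    print(f"Closer than Jupiter: {new_distance_miles < JUPITER_MILES}")
```

With the unrounded speedup the new distance comes out a little under the 467 million miles quoted above, which still puts the disk in Jupiter's neighborhood.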

That’s disk performance over the last decade. How much faster did CPUs, memory, and networks get in the same time frame? Would a 10x or 100x improvement really make a dent in these vast infinite spaces in time that computers deal with?

To computers, we humans work on a completely different time scale, practically geologic time. Which is completely mind-bending. The faster computers get, the bigger this time disparity grows.